Using UAV-based sensors for high-resolution crop monitoring

Technology provides more timely and accurate identification of crop stress for improving precision agriculture applications.

November 16, 2022 By Donna Fleury

UAV flying over an irrigated field in Saskatchewan.

Photos courtesy of Phillip Harder, USask Centre for Hydrology.

Like many other sectors, the ag industry is continuing to adopt the use of drones or unmanned aerial vehicles (UAVs) for field scouting and monitoring. Researchers and industry are interested in evaluating emerging sensor technologies that can enhance crop health observations and better inform precision management decisions.

Researchers at the University of Saskatchewan have a three-year project underway to provide the next step in precision agriculture by integrating additional data from UAV-mounted sensors. They are evaluating emerging sensor technology that is not currently or commonly used in precision agriculture, such as thermal, multispectral and other detection sensors. The project will use UAVs equipped with LiDAR (Light Detection and Ranging) to remotely sense multiple indicators of crop biophysical status for common crops in Saskatchewan, including wheat, canola and pulses. The UAV data will be validated against in-field ground observations.

“Our goal is to demonstrate tools and develop specific recommendations for farmers, agronomists, and crop specialists to use their own high-resolution UAV or satellite data to quantify and understand the variability of plant status at the field scale,” explains Warren Helgason, associate professor, civil, geological, and environmental engineering, at the University of Saskatchewan (USask). “We are trying to be ahead of the technology curve by using specialized equipment not readily available, including emerging sensors, specialized cameras and UAVs, to develop practical techniques for agronomists and farmers to understand the variability of plant status at the field scale. These emerging techniques hold great promise for agricultural applications, but there have not been any systematic evaluations of these approaches in the prairie context. Our research will inform more practical approaches and provide more confidence for agronomists and farmers when using their own high-resolution UAV or satellite data in the field.”

Helgason is collaborating on the project with Phillip Harder, research associate and drone pilot with the USask Centre for Hydrology. The Smart Water Systems Lab, a facility of the Centre for Hydrology, maintains and provides the UAV and sensor fleet to make this project possible.

Helgason adds that one of the more interesting technologies is UAV-mounted LiDAR, which can remotely sense multiple indicators for field scouting and monitoring. LiDAR is a commonly used remote sensing method for measuring the surface topography of an area, traditionally with a sensor mounted on a larger aircraft. “What is new is the ability to collect measurements with UAV-mounted LiDAR sensors at a very fine spatial resolution, on the centimeters scale. In terms of crop characteristics, that means we can collect crop height, density, leaf area and develop a 3-D representation of the plant canopy that gives us a good indication of crop health at extremely high resolutions. Although this information won’t tell us why there are differences, it does show the variation across the field during the growing season. We are also combining LiDAR with several other sensors to provide a snapshot of how plant health might vary in a field, and identify areas that may need a closer look during field scouting due to fertility or salinity issues. We will be able to develop high resolution maps showing spatial variability of the crop health status, which can also be correlated with combine yield maps where available and provides another level of information.”
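One common way to turn LiDAR elevations into crop height is to subtract a bare-ground elevation grid from a canopy-surface grid collected later in the season. The short Python sketch below illustrates that idea only; it is not necessarily the workflow used in the project. The function name `canopy_height`, the grid layout (rows of elevations in metres, with `None` for missing returns) and the sample values are all assumptions made for illustration.

```python
def canopy_height(surface, ground, min_height=0.0):
    """Subtract ground elevation from canopy-surface elevation, cell by cell.

    surface, ground: equally sized lists of rows holding elevations in metres
    (hypothetical layout). A cell set to None marks a missing LiDAR return.
    Negative differences, usually noise, are clamped to min_height.
    """
    heights = []
    for surface_row, ground_row in zip(surface, ground):
        row = []
        for s, g in zip(surface_row, ground_row):
            if s is None or g is None:
                row.append(None)  # keep gaps as gaps rather than guessing
            else:
                row.append(max(s - g, min_height))
        heights.append(row)
    return heights


# Illustrative values only: a 2 x 2 patch of a field.
ground_m = [[500.0, 500.5], [500.25, None]]
surface_m = [[501.0, 501.0], [500.0, 501.0]]

for row in canopy_height(surface_m, ground_m):
    print(row)
```

Keeping missing cells as `None` rather than filling them in means a later map shows where the sensor had no return instead of implying a measured height.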

Crop health mapping with UAVs or satellite imagery has mainly focused on the normalized difference vegetation index (NDVI), which provides a relative indication of crop vigour based on the relative proportion of near-infrared and visible (red) light that is reflected by the plant. However, several issues can reduce the utility of NDVI, such as ground exposure during early growth stages, interfering signals from weeds, and signal saturation from dense canopies. Most significantly, NDVI cannot be used during the flowering growth stage of canola. Adding these new sensors and LiDAR capability to UAVs is expected to overcome those issues. LiDAR can measure crops and the complete canopy whether or not they are actively growing, providing a full picture of plant health and biomass even for canola or any other crop.
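For readers who want to see the arithmetic, NDVI is conventionally computed per pixel as (NIR - Red) / (NIR + Red), where NIR and Red are the reflectances in the near-infrared and red bands. The Python sketch below shows that standard formula under simple assumptions: the function names, the nested-list band layout and the sample reflectance values are hypothetical, and returning 0.0 for a pixel with no signal is an illustrative choice rather than a convention from the article.

```python
def ndvi(nir, red):
    """Return NDVI for one pixel from near-infrared and red reflectance."""
    total = nir + red
    if total == 0:
        return 0.0  # assumption: treat a pixel with no signal as 0
    return (nir - red) / total


def ndvi_grid(nir_band, red_band):
    """Apply ndvi() cell by cell to two equally sized grids (lists of rows)."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Illustrative reflectances only: denser canopy reflects more NIR and less red.
nir_band = [[0.50, 0.45], [0.30, 0.08]]
red_band = [[0.08, 0.10], [0.20, 0.06]]

for row in ndvi_grid(nir_band, red_band):
    print([round(value, 2) for value in row])
```

Because the index is a ratio bounded between -1 and 1, values crowd toward the upper end over dense canopies, which is the saturation issue described above.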

The project involves field investigations using repeated surveys every two to three weeks during the growing season, with a UAV carrying a suite of sensors to quantify field-scale phenology. Researchers are evaluating both commonly used remote sensing products and emerging sensors and techniques that show promise but are less commonly used in existing commercial operations. The evaluation will be based on each metric’s ability to provide accurate insight into plant health and yield potential, as well as the practicality and financial cost of implementation. The UAV-mounted sensor technologies included in this study, which vary in cost and complexity, are: Red-Green-Blue (RGB) camera; multispectral sensor; hyperspectral sensor; and thermal sensor. Other sensors, such as infrared temperature sensors, are becoming more available and less expensive; however, they are still a bit tricky to use. The impact of factors such as time of day and environmental conditions on data collection will also be evaluated.

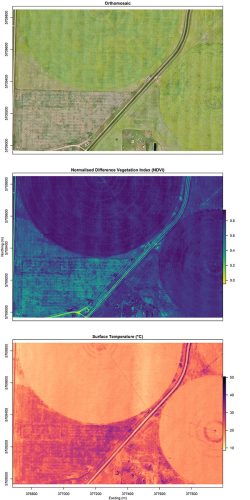

Example of sensor field data collected from a UAV over an irrigated field near Broderick, Sask.

The UAV field data is being collected from producer-managed fields during the growing seasons of 2022 and 2023. The specific crops targeted include spring wheat, barley, canola, and a legume, either lentils or peas. Pre-growing season LiDAR data is collected to measure bare-ground surface topography and peak snow depth for comparing soil-moisture-related differences in crop health status. In-field sampling and data collection for various soil parameters and crop height will be completed for ground truthing and validating the UAV sensor data. Helgason has also added an irrigated field to the project, including the testing of a thermal radiation surface temperature sensor, which collects data related to the surface temperature of an object and can be used to detect water-stressed areas. Field areas that have adequate moisture and are actively transpiring appear cool, while stressed areas appear much warmer.
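The idea that transpiring areas read cooler than stressed ones can be illustrated with a minimal Python sketch. It assumes surface temperatures have already been gridded in degrees Celsius, and it simply flags cells that are warmer than the field median by more than a chosen margin. The function name `flag_warm_cells`, the 2.0 °C default margin and the sample temperatures are hypothetical choices for illustration, not values or methods from the project.

```python
from statistics import median


def flag_warm_cells(temps_c, threshold_c=2.0):
    """Mark grid cells that are warmer than the field median by more than threshold_c.

    temps_c: list of rows of surface temperatures in degrees Celsius
    (hypothetical layout). Returns a grid of booleans of the same shape.
    """
    all_values = [t for row in temps_c for t in row]
    baseline = median(all_values)  # the median resists a few hot outliers
    return [[(t - baseline) > threshold_c for t in row] for row in temps_c]


# Illustrative values only: one cell stands out as much warmer than the rest.
temps_c = [
    [24.0, 24.5, 25.0],
    [24.2, 29.0, 24.8],
]

for row in flag_warm_cells(temps_c):
    print(row)
```

A flagged cell only marks where to look. As the researchers note, ground truthing is still needed to determine what is causing the difference.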

“Once we have collected the data we will develop actionable information for developing a set of recommendations that can guide the use of remotely sensed crop health indicators,” Helgason says. “Essentially we are working on the more complicated aspects that will inform practical approaches and take out some of the uncertainty around using these sensors for decision making. We are actively trying to develop a workflow that can be useful and simple for agronomists and farmers to adopt and speed up decision making. This will help farmers and agronomists have more confidence in the data and information being produced. Using UAV-mounted sensor technology does save time and gives us information we wouldn’t otherwise have, but it is not a silver bullet. There is still a need for boots on the ground to ground-truth what the data is telling you, and also to do some sleuthing to determine what is causing an issue.”

“Ultimately we aim to identify the most suitable remote sensing technologies for precision agriculture applications in Saskatchewan,” Helgason adds. “We want to help users take whatever drone and sensor package they have and to be able to maximize the use of the data collected. This will allow for more timely and accurate identification of crop stress and improvement of precision agriculture applications.” The final data analysis, recommendations and communication of results will be completed in the final year of the study, ending March 2025.